We’ll now share the feedback to the other participants. Even if not awarded prizes, we hope this feedback helps you in your journey as a model builder!

@innotech-ai

juror 1

This writeup starts off in a promising manner, describing their approach in a storytelling manner, but unfortunately, seems to basically be incomplete.

juror 2

- Interesting analysis on modelling juror behavior

- I like their baseline-first strategy

- Didn’t achieve great results

juror 3

I liked how they tried to think outside the box, and was reading eagerly hoping they had something interesting to show for it … but they didn’t

juror 4

Writeup reflects the short timeframe that the modeler had. I would like to see their results, with a bit more time.

The techniques used here are pretty standard, nothing really sticks out.

juror 5

I think it’s awesome that these folks jumped in with just 5 hours left in the competition and gave it their all, but the writeup is hard to follow.

@kwachunling

juror 1

Very short writeup with no data exploration and only weak motivation to their (albeit very interesting) approach.

Would have like a lot more discussion of the idea of looking at the ecosystem as a graph, and the corresponding use of GNNs.

Left me wanting more.

juror 2

- Great approach using GNN! Conceptually makes sense to model dependencies as graphs.

- Write-up focuses on the GNN choice and the code, but didn’t explain what actions were taken after it failed to generalize (change hyperparams, etc)

juror 3

This feels very LLM-y, I don’t think they had any intuition for how to adjust the hyperparameters or interpret the quality of their predictions

juror 4

love the idea of using a GNN here, with the idea that “Ethereum ecosystem is a graph” (in fact, this idea is basically baked into the structure of the data).

Unfortunately, most of the writeup is technical and focuses on how the GNN is implemented. It would be nice to see more about the outcomes – what did the GNN predict, and/or what its performance tells us about the jurors’ mindset.

juror 5

I really appreciate the argument that we need to think about these codebases in a more network-oriented way, rather than as isolated entities. Ultimately it’s not clear to me if that positionality necessarily means that GNNs are the right tool, but still a good submission

@dipanshuhappy

juror 1

This is another one which doesn’t try to predict based on juror behaviour but decides to use some framework utilized by Robinhood. There is no motivation for this choice, and the description of the application of this technique is also light on details

juror 2

- Creative framing of the problem using Relentless Monetization

- LLMs were used (and it makes sense to some extent), but the description lacked details on what worked/didn’t work and comparisons with simpler ML-based approaches

juror 3

- clever approach … I’d love to see the data they got back from their prompting … and the comparisons among LLMs

- I’m not convinced this is the RIGHT prompt, but this is definitely an outside the box approach

juror 4

I like the novel application of an existing technique (“Relentless Monetization”, who knew?), but it feels disconnected from any juror data.

Using LLMs is an intriguing idea, but lacks explainability. I would want to see a model that generalizes to a different version of the data set.

juror 5

This writeup is not convincing. I think using LLMs this heavily without exploring their drawbacks isn’t great. It’s especially not great when you pair that with another untested idea (in this space at least) (“relentless monetization”). Below average.

@lisabeyy

juror 1

Short writeup with very few details. It does cover the entire process and reasoning, and is honest and clear. Despite being short, it at least motivates the choices made and explains the results.

juror 2

- Good motivation and explanation of utilized techniques

- Nice ablation study (kind of feature importance although not from model itself)

- Didn’t take juror heterogeneity as part of model

juror 3

- Smart, I like how they had a good intuition for how to approach the problem and how to sanity check the results

- They did a good amount of fine tuning as well

- Would have liked to see this person team up with Zoul

juror 4

appreciate the detailed writeup, as well as the humanity/humility in the author’s prose. There’s clear evidence of authentic engagement with the data, with feature engineering and other methodological choices well-explained.

juror 5

This is an average writeup, things are explained well enough but it’s also very terse and doesn’t really increase my understanding of deep funding.

@alertcat

juror 1

While detailed in terms of steps and mathematical details, it is too terse and telegraphic in nature to be a good writeup. Very little text description.

juror 2

- This shows that author understood the mathematical nature of competition very well, and also presented proofs of why his model fits the competition criteria

- Pure mathematical model, fast and doesn’t require training

- Write-up could be made less technical and easier to read

juror 3

- Similar feedback to lisabeyy – they knew a good tool for the job and applied it well

- I personally like these straightforward technical approaches

- That said, I don’t like BT-based approaches because they are based on juror features as opposed to repo features

juror 4

The writeup probably doesn’t do justice to the approach. The details are interesting to me (hard-math-y person), but almost read like filler.

I would like to see way more effort expanding the last few sections, which offer results and interpretability. It feels off to me to treat this as a pure optimization problem when the data set is so rich with potential insights.

juror 5

I wish this writeup had more natural-language explanations/ commentary. The approach might be cool, but I think the writeup is average. It’s not very illuminating, besides letting me know that maybe “direct weighted least squares” is an interesting technique to look into.

@rhythm2211

juror 1

Part 1 & 2 of the writeup are very detailed and provide details on exploratory data analysis and then they seem to run out of steam on 3 & 4, which are very short paragraphs with almost no details. I would consider this submission incomplete.

juror 2

- Liked the extra effort to fetch missing features via Github API

- Write up well written, detailed and easy to follow

- Didn’t find many metrics (maybe inside Jupyter notebook)

- I expected some more BT discussion

juror 3

- Sigh. There’s potential here, but this feels like exploratory data analysis with no idea at how to arrive at a conclusion.

- The notebook feels like a bunch of reused snippets from other competitions and LLM codegen (pandas is imported 43 times)

- That said, the data viz is pretty and is the type of stuff you’d expect a junior data scientist to cook up while trying to learn about a dataset

juror 4

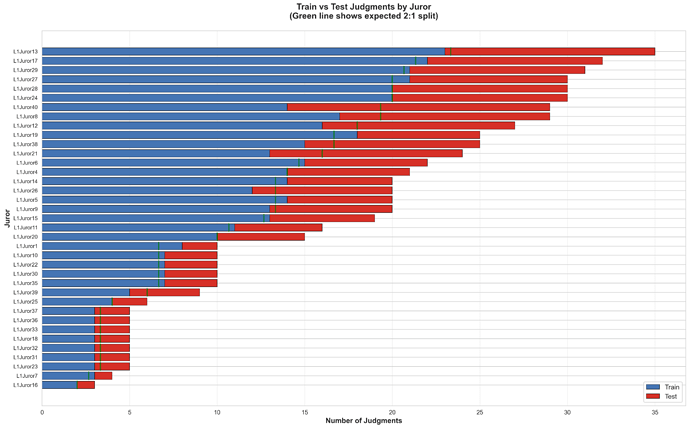

I liked the visuals, but didn’t always agree with the interpretation. (In particular, comparisons-per-jurordidn’t seem terribly imbalanced to me.)

What’s missing (in my view) is,: a connection between the lessons learned from the EDA, and the eventual modeling efforts. The modeling details are very sparse, in terms of both design choices and insights gained.

juror 5

I think that going with a model-per-juror approach is pretty interesting, but I don’t understand the claims about juror inconsistency. In particular, if a juror tends to use higher ratings, we shouldn’t be surprised that the standard deviation of their ratings is higher, and in any case I don’t think standard deviation and “inconsistency” are the same thing. Inconsistency to me denotes making the same choice differently over time (i.e. sometimes i prefer A to B and sometimes I prefer B to A), and having a high standard deviation is like saying “I like A a lot more than B, and B a little more than C”, which isn’t the same.

Average or below average.

@khawaish1902

juror 1

Detailed writeup, but relies too heavily on code, math and visualizations without enough text to explain. But it is at least detailed, and covers the entire effort.

juror 2

- Detailed write-up, problem understanding and clear approach

- Nice that used several models and compared them

- Limited quantitative validation and discussion

juror 3

- Top 5

- Good, I like how the person tried a variety of different approaches and compared them … this reflects a maturity of thought that has been absent in most other submissions

- Their conclusion is also spot on: “Direct optimization of the competition objective outperformed sophisticated feature engineering when training data was limited.

juror 4

The writeup has a lot of information, but doesn’t really offer reflection or insight.

My question is “What do we know after this modeling effort, that we didn’t know before – about the ecosystem data itself, or modeling with ecosystem data in the future?

juror 5

Not sure that some of the visuals are really necessary (breakdowns of which languages projects used? Text clouds? These don’t increase my understanding of the problem space). Aside from that, I couldn’t find much new / exciting info in this writeup.

@Oleh_RCL

juror 1

This is a strange writeup which seems to fail to identify the purpose of the contest, which was to mimic and therefore scale the jurors decisions, but rather to try to ‘do better’ than them! It is very brief and just a broad overview with basically no details.

juror 2

- Discussion makes sense, different models, data enrichment

- I lacked some technical analysis of features, rationale for choices

juror 3

- Puzzling. The write up is sparse and the model is a single file that runs on local/private data with no output charts, so I have no way of reviewing any of their decisions

- It could be good but I have no way of verifying

juror 4

I think the writeup is clear, and the process intriguing – for instance, augmenting the data with social media and GitHub commits.

The problem I have is that the code is massive and hard-to-parse. This would be a much stronger writeup if the prose elements clearly connected to the code, and/or if the code itself were easier to read.

juror 5

An above-average writeup, but I don’t think there’s too much new here that pushes our understanding of deepfunding forward. Feature engineering and model ensembles are pretty par for the course in these competitions. I do think using pagerank is a cool idea, though.

@Limonada

juror 1

Detailed explanation of the chosen approach, but lacking the data exploration and objective justification of the approach compared to other approaches. Would have been good to see how they went from the data exploration, to a few approaches leading to the final choice.

juror 2

- Also suspect write-up is LLM generated

- It lacks a bit of technical demonstration, as other write-ups have, like feature importance or code snippets

juror 3

Frustrating. This represents a novel approach to the problem and I would love to see code / charts / residuals / ANYTHING technical but there is NOTHING linked in the submission

juror 4

The writing here feels particularly flat, lacking detail. 8_suspect it’s LLM-generated. The methodology is actually quite interesting, but there needs to be more explanation. It would be great to have something more: visuals of the architecture, insights from the final model, – something.

juror 5

I appreciate the conceptual framing of PGF as an ongoing process. That feels like an important/unique contribution. But the writeup is also a little heavy on the jargon and a little light on data/ statistics. An illustrative example would have also helped me decode some of the author’s claims. Still above average, though.

@jpegy

juror 1

Somewhat breezy and not very strongly motivated or supported. Definitely a concerted approach trying to generate synthetic data to solve the data scarcity problem, but would have liked a statistical analysis to support the thesis. Very honest about the sub-optimality, which was the redeeming factor.

juror 2

- Didn’t go into too much detail on the approach taken

- RIghtfully acknowledged problems on dataset, interesting to use LLMs to generate synthetic data to tackle that

juror 3

- Great write-up and would have been really interested to see more data / outputs from their attempts.

- I feel like there’s a notebook / repo or set of charts that could have easily put this submission into the top 5 for me

juror 4

Excellent reflection on work. The approach might be promising if further refined.

It’s hard on me personally to work with text-only writeups: surely there’s some visual, number, etc that could be given in summary? Anyone can write any words they wish, and 8_feel credibility is added by including some alternate (and perhaps harder-to-generate) representations.

juror 5

This is an above average write up that explains some of the pitfalls of over-indexing on LLM generated data. Even though the idea of using a bunch of LLM data feels sketchy / weird to me, i’m glad jpegy did it because it increases our understanding of how things work both with LLMs and with the competition. Above average.

@MavMus

juror 1

Extremely disjointed and short descriptions, with large code blocks expected to explain themselves. Very low effort.

juror 2

- explanations, not very good structure

- Reasonable ideas that should have been better explored

juror 3

- A bit light, though I appreciate their attempt to enrich with some additional data about the utility of the repo

- Feels like a modest weekend effort but nothing stands out

juror 4

Clear description of process, but more care needs to be taken to ensure reproducibility.and interpretability when working with LLMs.

The writeup would benefit from at least one diagram to give an alternate representation of the model architecture

juror 5

I didn’t find this writeup to be very insightful, and didn’t think the code was explained well. Below average.

@Todser

juror 1

This one is a head-scratcher. It literally describes a multi-turn session with a chatbot where the contestant tried to literally guess the scores themselves. They got very bad results which is not surprising. This does not feel like a serious submission, and the attempts to make the writeup entertaining do not compensate in my opinion.

juror 2

I don’t see a model/code nor attempt at building one, just reflection on how to theoretically tackle this project

juror 3

- I really really wish this person had some structured data they captured from each of these iterations, because I think this is an intriguing approach … he is basically brute forcing the RLHF approach on a very narrow use case

- Not Top 5 but definitely a creative person at work!

juror 4

Intriguing idea, and i think that it’s possible this “Iterative Hypothesis Testing” concept could be successfully adapted to chatbot/agentic analysis approach (perhaps combined with more traditional quantifiable Machine Learning methods).

It seems like the approach was reasonably successful, but it’s kind of ad hoc – not likely to generalize well without more refinement.

juror 5

I appreciate the author’s interest in just thinking through the problem via simple heuristics and trying to maintain some level of human understandability, and I think the failure of this approach does shine a light on how hard deepfunding is (or how careful we need to be about collecting juror data!). However, I think this is the only thing going for this project, making it an average or slightly below average writeup.

@clesaege

juror 1

While an interesting and very well described approach of designing a prediction market, I wish this submission contained the basic data exploration which then could motivate the innovative choice of the contestant. As such, while it is indeed very well explained and structured like a very brief research paper, I felt it was a high level abstract idea and not as much of a roll-up-the-sleeves and dig into the data approach. I think that would have been very useful and could have motivated at least in part the approach taken.

juror 2

- I am obviously biased here, but it’s been proven that, given proper incentives, PMs can act as great aggregators of information. Thus one could use such mechanism to find out the dependencies (similar to an ensemble of the best models)

- Some problems on PMs however persist, e.g. low liquidity for participation, expert knowledge required, etc

- Overall like the idea very much, but as a submission I’m ranking ML/LLM approaches higher because they were more closely aligned with goals

juror 3

- Not sure?

- Cool! But I’m not sure how to score this though. Wasn’t Seer also a part of the round design?

- If not, then this is definitely a top 5 approach. If so, then I’d rather clear the way for independent / non-affilitated submissions

juror 4

In My Top 5

Intriguing use of prediction markets, to compete with the AI/Machine Learning models.

It’s somewhat difficult to compare, due to the difference in nature. The writeup is through, and the visuals help tell the story well.

juror 5

Prediction markets definitely have their strengths but I can’t get around the clear performativity issues that would come from using prediction markets as the sole mechanism to give away money (as opposed to testing them against a dataset). If you can spend money to adjust the weights of your project in a market, and then those weights determine some payout to that project, that seems like a clear opportunity for gaming the system. Still a cool writeup though, and I appreciate the ideas. Top 5

@duemelin

juror 1

This is clearly LLM-generated!

Maybe that is not a deal-breaker in an ML/AI competition, but the contestant has not even bothered to remove the LLM’s voice:

“Here is a ready-to-post write-up for the forum, incorporating the results from your script’s output.

juror 2

- LLM-generated

- If the code was there, then maybe I could have provided a better analysis

juror 3

Not much to add to what other reviewers have said about this submission

juror 4

The comment “Here is a ready-to-post write-up for the forum, incorporating the results from your script’s output.” seems a bit strange. LLM output?

To be honest, i think the overall writeup is fine. There isn’t much in the way of technical innovation or subject matter insight, though. i think “strip away context and do pure mathematical optimization” is an OK approach, if the optimization is well-done. This needs more explanation of the underlying optimization technique.

juror 5

I definitely did not love how the author forgot to remove the trimmings from the LLM response he copy-pasted into this forum post. Aside from that, it didn’t feel particularly insightful.

Below average.

.

.

).

). or pressing

or pressing