An astonishing amount of all deployed Ethereum bytecode is used for bitmasking. WETH9 is 32.9% bitmasking. The recently deployed AAVE V4 Core uses 23.3% of its bytecode for bitmasking. Working from the zellic dataset of all unique ethereum contract bytecode collected in 2025, one pattern for bit masking out a 20-byte addresses is responsible for 8.16% of all bytecode on ethereum. Adding next the next three most common bitmasking patterns bring the total up to 10.4% of all bytecode. Newer versions of the solidity compiler often interleave bitmasking in with other instructions, which means to actually track bit masking requires static analysis of each contract. However, I believe that the end total is probably somewhere between 12% to 20% of all unique contract bytecode.

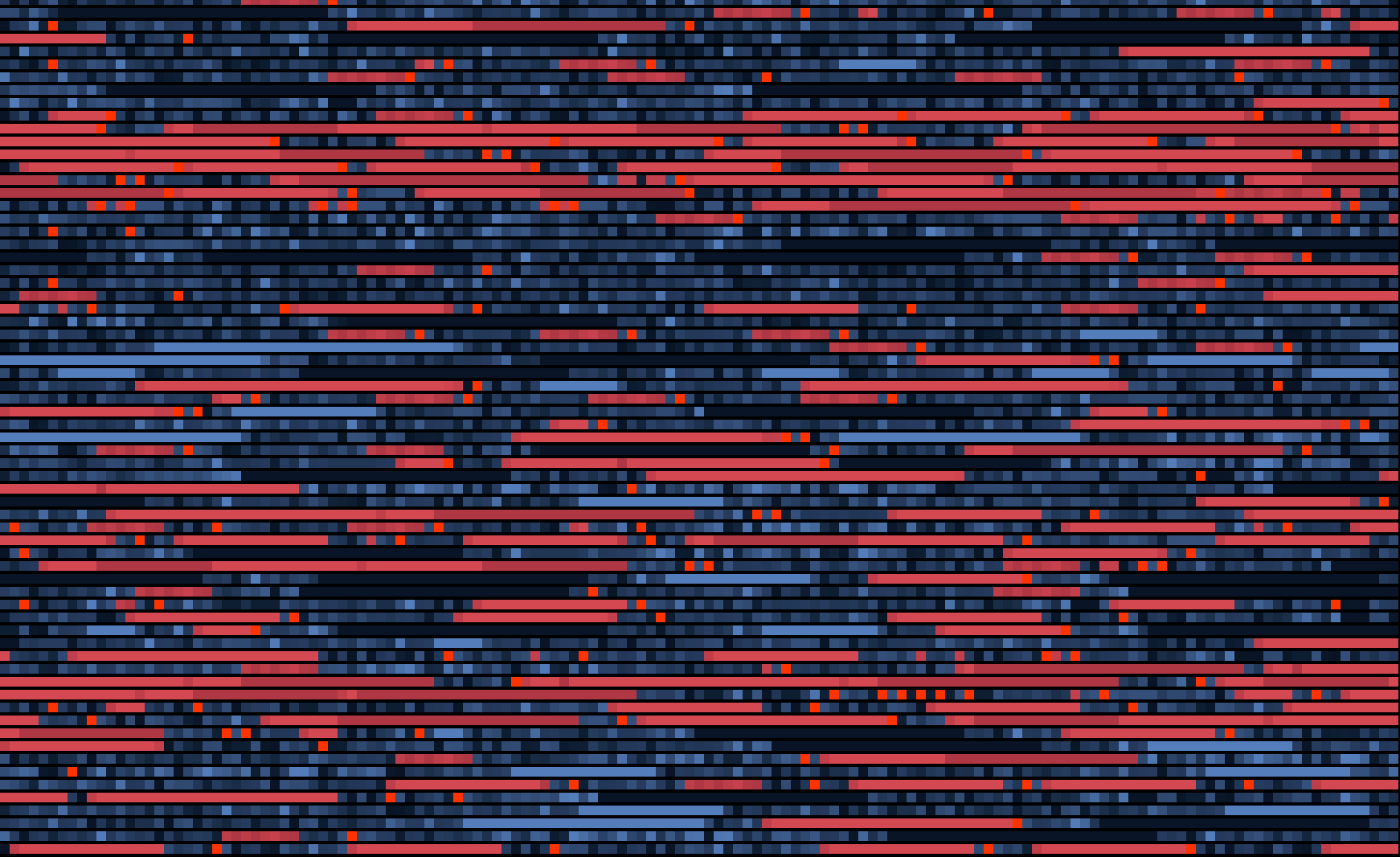

Visualization of a portion of AAVE V4 core bytecode. Bit masking operations marked in red. 23.3% bitmasking:

Why is bitmasking such a huge part of EVM deployed bytecode? It’s because the stack is made up of 256 byte values, and when loading types that are smaller than that onto the stack, the high bits need to be cleared after loading. This applies to packed data from storage or memory, and to untrusted data from calldata. Contracts that do a lot of address use get hit hard (which is most contracts), as well as contracts that optimize their storage use (which is most contracts by prominent teams.)

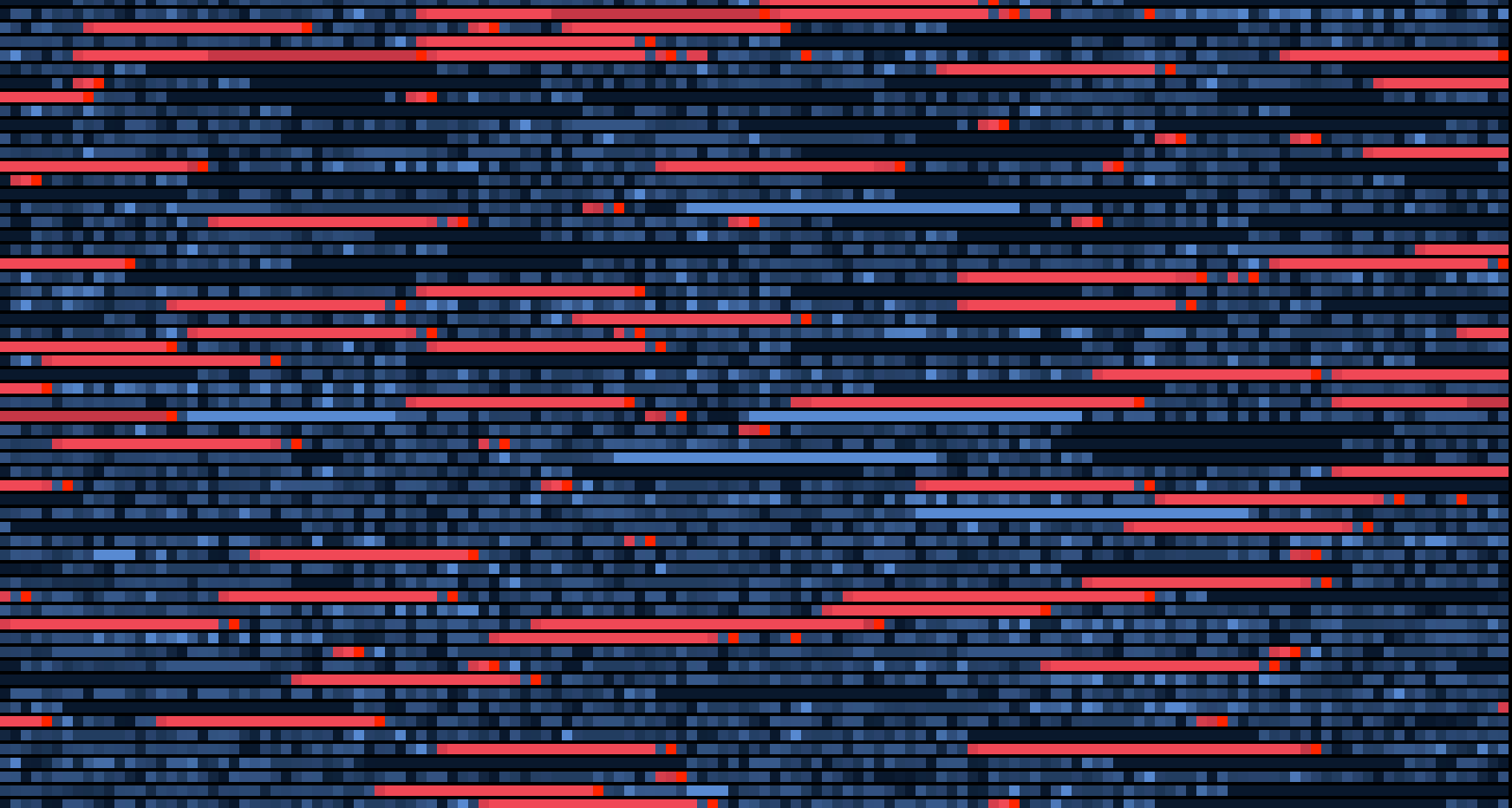

Visualization of a portion of USDC bytecode. 14.7% bitmasking:

The two common approaches to bit masking are other sides of the tradeoff between of smart contract size vs runtime gas usage.

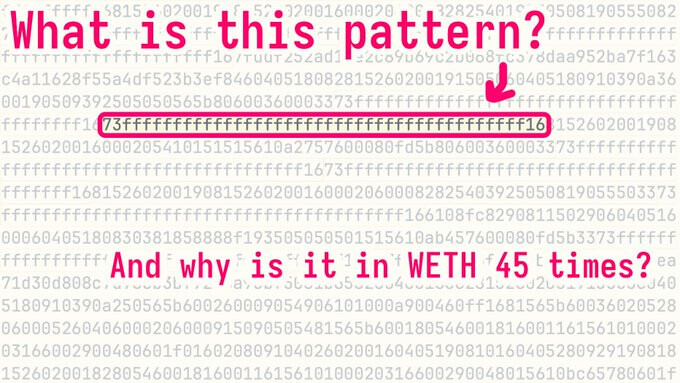

The most gas efficient approach is to just PUSH the bitmask, then AND. For a boolean, this fine, 3 bytes, 6 gas. The most common mask bytecode used, 0x73ffffffffffffffffffffffffffffffffffffffff16 masks an address, and is 22 bytes long, 6 gas. Sometimes when using packed data from storage, a full 32 bytes will be pushed, for 34 bytes, 6 gas.

The storage efficient approach is to compute the mask by shifting a bit and then doing a subtraction by 1 to turn all the lower bits on. This results in any number of right aligned bits for a fixed cost. This pattern looks like PUSH1 1, PUSH1 1, PUSH1 160, SHL, SUB, AND. This makes an address (or any other right aligned mask) be 9 bytes long and take 18 gas.

As said before, Solidity may spread these opcodes out and interleave them with other operations.

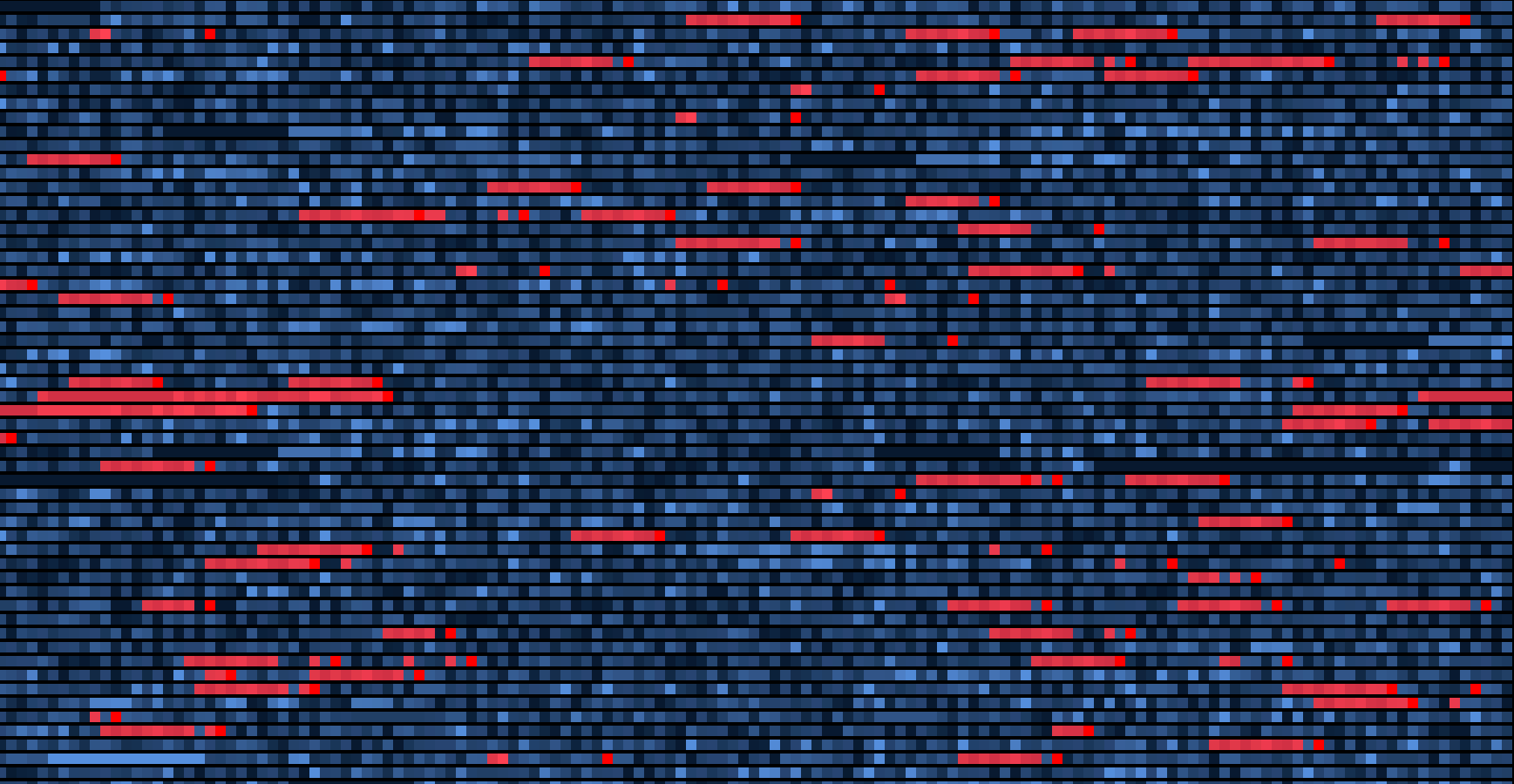

Visualization of a portion of an early deployed Morpho Blue contract, using the high gas, low space bitmasking, and not much storage packing. 6.3% masking bytes:

If we wanted to improve this, what could we do?

- The absolute silliest thing would be to add a

MASKADDRESSopcode that the keeps the rightmost 20-bytes of the stack. This would create the biggest savings, since address masking is both the most common masking, and one of the largest in terms of bytes, and would turn it into 1 byte, 3 gas. - We could add

MASKBITSopcode that takes immediate values, in EIP-8024 style. This would turn address masking into 2 bytes, 3 gas, but also work for other sizes of right aligned bytes (for example, 128 bit numbers). Immediate values certainly increase the effort of working with bytecode, but if EIP-8024 was already in place, this would share code. - We could add a

MASKBITSopcode that takes a value from the stack. The rightmost byte of this would be number of bytes to keep, and the second byte would be an optional shift left of the mask. So to mask an address would bePUSH1 160, MASKBITS, 3 bytes, 6 gas. To mask a left aligned uint128, would bePUSH2 128 160, MASKBITS, 4 bytes, 6 gas. This has the advantage of being general and a simple opcode to implement and work with. - Without making EVM changes, the current compact pattern used by the solidity compiler could be improved, from 9 bytes, 18 gas to 4 bytes, 9 gas by using

MLOADto select a slice of prestaged memory. The standard solidity preamble would move the free space pointer further on, then add aZERO, NOT, PUSH1, MSTOREto place an all on set of 256 bits after the reserved zero memory slot, and followed by another 256 bits of zero memory. This would allow selecting any byte aligned, left or right aligned mask just by loading the correct range of memory withPUSH1, SLOAD, AND.

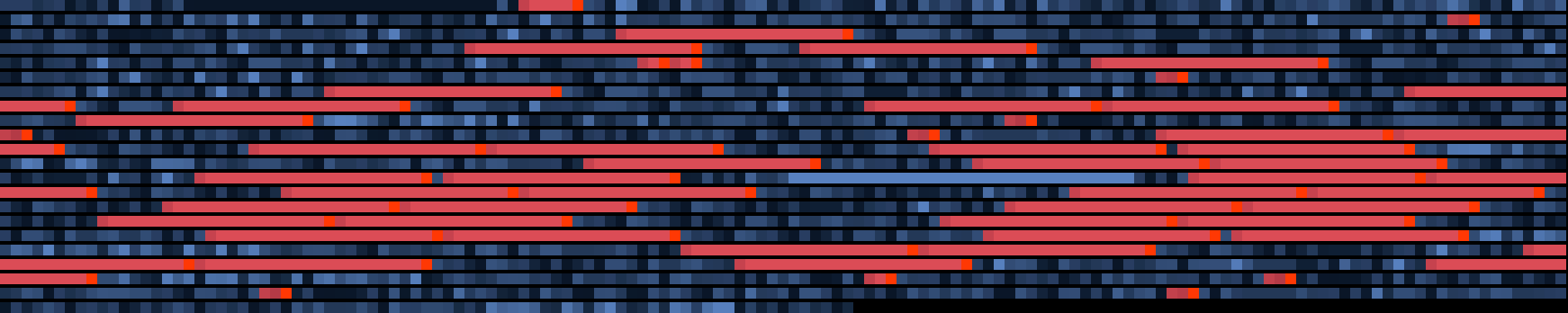

Visualization of the entirety of WETH9. The solidity compiler decided to double many of the masks. 32.9% bitmasking:

Your thoughts on these? There’s room here for around a 9% improvement in effective contract sizes, as well as much cleaner bytecode. Or should we leave things the way they are?