Hi Magicians,

This is a small R&D thread (separate from my AA / SmartAccount work). I’m exploring a UX-oriented hypothesis:

Future “liquidity” may increasingly show up not only as price but also as the movement of meaning, i.e., contextual commitments that people reference and aggregate over time.

I’m prototyping an onchain “instrument” (not a finished DApp) where users can:

- commit “contexts” (hash + URI),

- commit structured “declarations” as canonical keccak256 hashes,

- aggregate them into epochs (Merkle root finalization by an author),

- and redeem via Merkle proofs to mint a commemorative NFT.
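To make the flow above concrete, here is a rough offchain sketch of the declaration-hash, epoch-root, and proof-check steps. It is an illustration only, not the code in the repo. Several things are assumptions: the declaration fields (`author`, `context`, `text`), the canonical form (JSON with sorted keys), and the sorted-pair Merkle layout are all hypothetical choices. The hash is also a stand-in: Python's standard library has no keccak256, so the sketch uses SHA3-256, whose digests will not match onchain values.

```python
import hashlib
import json


def h(data: bytes) -> bytes:
    # Stand-in hash: SHA3-256 from the standard library. This is NOT Ethereum's
    # keccak256 (the padding differs), so digests will not match onchain values.
    return hashlib.sha3_256(data).digest()


def declaration_leaf(declaration: dict) -> bytes:
    # Assumed canonical form: JSON with sorted keys and no extra whitespace.
    canonical = json.dumps(declaration, sort_keys=True, separators=(",", ":"))
    return h(canonical.encode("utf-8"))


def hash_pair(a: bytes, b: bytes) -> bytes:
    # Sorted-pair hashing, so proofs do not need left/right position flags.
    return h(a + b) if a <= b else h(b + a)


def build_levels(leaves: list) -> list:
    # Returns every level of the tree; the last level holds the single root.
    if not leaves:
        raise ValueError("an epoch needs at least one leaf")
    levels = [list(leaves)]
    while len(levels[-1]) > 1:
        cur, nxt = levels[-1], []
        for i in range(0, len(cur), 2):
            if i + 1 < len(cur):
                nxt.append(hash_pair(cur[i], cur[i + 1]))
            else:
                nxt.append(cur[i])  # an unpaired node is promoted unchanged
        levels.append(nxt)
    return levels


def merkle_proof(levels: list, index: int) -> list:
    # Collects the sibling hash at each level on the path from leaf to root.
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling < len(level):
            proof.append(level[sibling])
        index //= 2
    return proof


def verify(leaf: bytes, proof: list, root: bytes) -> bool:
    # Recomputes the root from a leaf and its proof, as a redeem step would.
    node = leaf
    for sibling in proof:
        node = hash_pair(node, sibling)
    return node == root


# Hypothetical epoch with three declarations.
declarations = [
    {"author": "0xA", "context": "ctx-1", "text": "first"},
    {"author": "0xB", "context": "ctx-1", "text": "second"},
    {"author": "0xC", "context": "ctx-2", "text": "third"},
]
leaves = [declaration_leaf(d) for d in declarations]
levels = build_levels(leaves)
epoch_root = levels[-1][0]
proof_for_third = merkle_proof(levels, 2)
print(verify(leaves[2], proof_for_third, epoch_root))
```

In this framing, only `epoch_root` would need to be finalized onchain, and a redeemer would present a leaf plus its proof. A production tree would also want domain separation between leaves and inner nodes, which this sketch leaves out.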

Repo / R&D doc:

GitHub - cancan007/LCG_contracts (see README_RND.md)

I also wrote an article on this topic:

Context Observatory (R&D): Observing Meaning Movement Onchain Without Turning It Into a Score

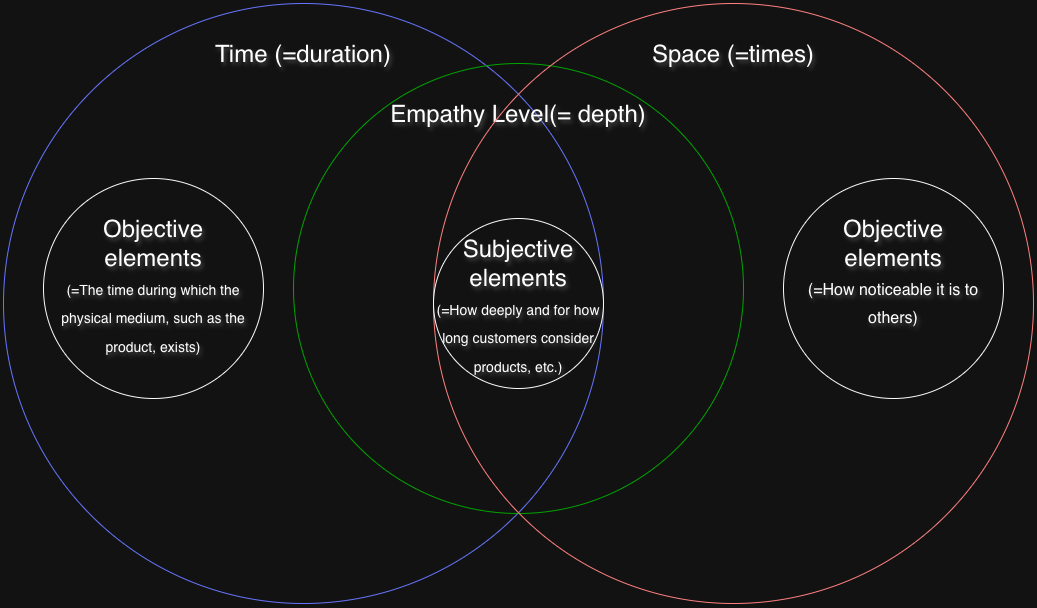

Visual lens (Time / Space / Empathy depth), the conceptual model behind the experiment:

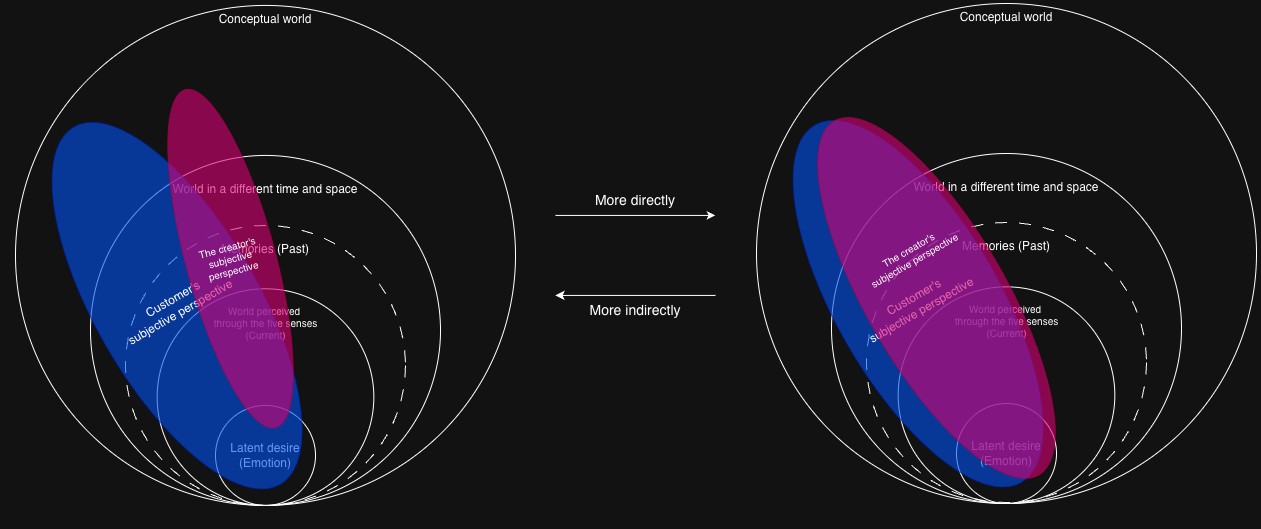

(Optional additional diagram: direct vs indirect background alignment)

What I’m not trying to do (to keep scope tight)

- Not proposing an identity / reputation scoring system.

- Not trying to define a universal “value metric”.

- Not claiming onchain meaning should be globally ranked by the protocol.

The question (feedback I’m specifically looking for)

If we treat these as UX primitives (timestamped commitments + later aggregation), what is the minimum acceptable anti-spam / abuse-control boundary you’d require?

For example, which baseline would you consider reasonable:

- fee-only (natural cost),

- rate-limits,

- stake / deposit with slashing,

- allowlist / attesters,

- offchain moderation for visibility + onchain neutrality,

- something else?

If you’ve seen prior art (EIPs / existing protocols) that tried similar “context / meaning / declaration” primitives, pointers would be hugely appreciated.

Thanks!